AI & Operational Intelligence Questions for Labeling Machines

Last Updated: April 2026

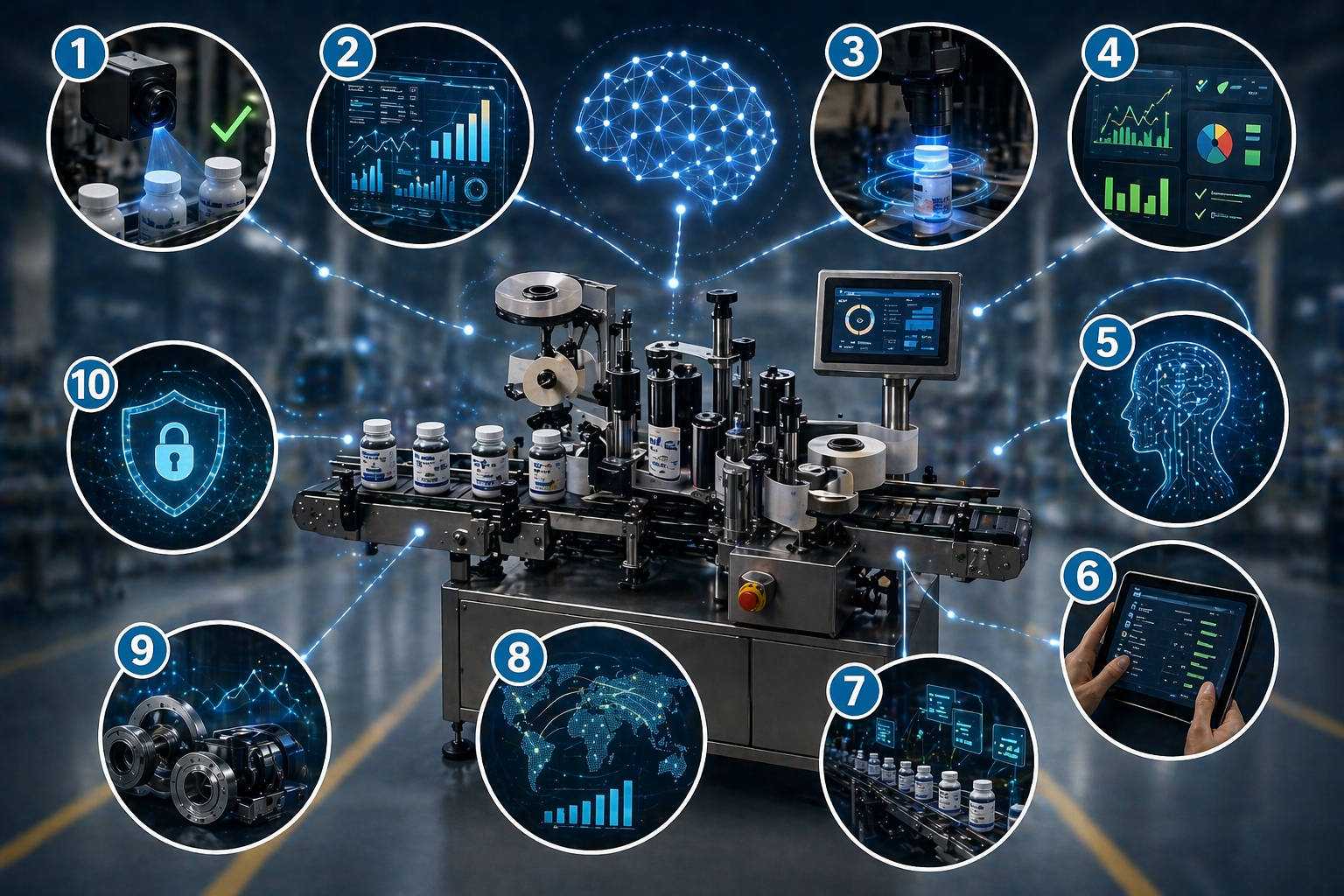

Modern labeling buyers no longer ask only about speed, accuracy, and footprint. Instead, they want to know whether the machine can detect problems early, reduce manual intervention, and help the line recover faster when conditions change. Therefore, AI and operational intelligence now play a much bigger role in machine evaluation.

This page covers five smart buyer questions focused on real-time auto-correction, predictive maintenance, HMI-guided setup, augmented-reality support, and difficult inspection conditions such as reflective or translucent labels. In addition, it explains why each question matters when the goal is to reduce supervision, avoid downtime, and improve confidence on the floor.

Direct answer: Buyers should evaluate whether a labeling machine can detect problems early, adapt to changing conditions, guide operators more intelligently, and reduce the need for constant human correction.

Direct Answer

Direct question: What should buyers focus on when they evaluate AI and operational intelligence on a labeling machine?

Buyers should focus on whether the machine can do more than report faults. The strongest questions therefore test whether the system can self-correct, anticipate failures, guide setup, support remote troubleshooting, and handle difficult visual conditions without constant manual intervention.

Direct answer: AI and operational intelligence matter when the machine can convert data into faster decisions, fewer stops, and less operator dependence.

Direct answer: The best intelligent labeling system does not just show alarms. Instead, it helps prevent problems, explain problems, and shorten recovery time.

Key Takeaways

- Direct answer: Real intelligence starts when the system adjusts, predicts, or guides instead of only alerting.

- Direct answer: Auto-correction can reduce repeat defects and reduce manual intervention.

- Direct answer: Predictive maintenance can improve uptime when it uses condition data instead of calendar-only schedules.

- Direct answer: HMI-guided setup can shorten changeovers and reduce operator guesswork.

- Direct answer: Remote assistance matters most when the line goes down outside normal service hours.

- Direct answer: Difficult labels require stronger inspection strategies than standard sensors alone.

- Direct answer: Reflective and translucent materials often expose the limits of basic sensing systems.

- Direct answer: Buyers should ask how intelligence performs under real production conditions, not only how it is described in a demo.

Why This Group of Questions Matters

Direct question: Why should buyers ask AI and operational intelligence questions at all?

Direct answer: Buyers should ask these questions because the cost of supervision, downtime, troubleshooting delay, and repeated setup errors can exceed the cost of the machine feature itself.

A labeling machine may meet the speed target and still create daily friction if it needs constant monitoring or frequent manual adjustment. Therefore, buyers should test whether the system can reduce intervention as line conditions change. In addition, they should ask whether the machine turns raw data into useful action.

This matters even more on multi-shift lines, high-mix environments, and lean staffing models. Consequently, intelligence features should be judged by how much decision burden they remove from the floor team.

1. Does the Vision System Feature Real-Time Auto-Correction?

Direct question: What should buyers ask about real-time auto-correction?

Direct answer: Buyers should ask whether the system can do more than reject a bad package and whether it can also adjust timing, position, or alignment logic for the next package automatically.

Many systems can detect a bad label placement. However, detection alone does not remove the underlying problem. Therefore, buyers should ask whether the machine can use inspection feedback to shift timing, refine placement, or compensate for drift before the same defect repeats.

This question matters because a reject-only system tells you what failed, while a self-correcting system may help reduce the number of failures that happen next. As a result, real-time correction can improve yield and reduce supervision pressure at the same time.
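To make the difference between reject-only and self-correcting behavior concrete, the following is a minimal sketch of one possible feedback loop. It assumes the vision system reports a signed placement error in millimetres for each package; the class name, gain, deadband, and trim limit are illustrative assumptions, not values from any specific machine or vendor.

```python
from collections import deque
from statistics import mean


class PlacementCorrector:
    """Hypothetical closed-loop trim for label placement offset (millimetres)."""

    def __init__(self, gain=0.5, window=5, deadband_mm=0.2, max_trim_mm=3.0):
        self.gain = gain                  # fraction of the average error corrected per step
        self.deadband_mm = deadband_mm    # ignore errors smaller than this
        self.max_trim_mm = max_trim_mm    # safety limit on total correction
        self.errors = deque(maxlen=window)
        self.trim_mm = 0.0

    def update(self, measured_error_mm):
        """Record one inspection result and return the trim for the next package."""
        self.errors.append(measured_error_mm)
        avg_error = mean(self.errors)
        if abs(avg_error) > self.deadband_mm:
            # Move the trim against the average error, then clamp it to the limit.
            self.trim_mm -= self.gain * avg_error
            self.trim_mm = max(-self.max_trim_mm, min(self.max_trim_mm, self.trim_mm))
            # Start a fresh window so old errors do not drive a second correction.
            self.errors.clear()
        return self.trim_mm


if __name__ == "__main__":
    corrector = PlacementCorrector()
    drift_mm = 1.0  # assumed constant drift introduced by the line
    for package in range(6):
        error = drift_mm + corrector.trim_mm
        trim = corrector.update(error)
        print(f"package {package}: error={error:.3f} mm, next trim={trim:.3f} mm")
```

A reject-only system would stop at measuring the error. The sketch adds the step buyers should ask about: feeding that measurement back into the next application, with a deadband and a trim limit so the loop does not chase noise or overcorrect.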

2. What Is the AI-Driven Predictive Maintenance Interval?

Direct question: What should buyers ask about predictive maintenance on a labeling machine?

Direct answer: Buyers should ask whether the system relies only on time-based maintenance or whether it also uses condition data such as vibration, torque, load, and anomaly patterns to predict service needs earlier.

Calendar-based maintenance still has value, yet it treats every operating condition as if it were the same. By contrast, condition-aware maintenance can respond to what the machine is actually experiencing. Therefore, buyers should ask what signals the system monitors and what type of warning it can provide before a failure occurs.

This matters because the gap between a planned service window and surprise downtime can be costly. Consequently, predictive maintenance should be evaluated by the quality of the signals, the quality of the warning logic, and the clarity of the maintenance output.
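As an illustration of what "condition data" and "warning logic" can mean in practice, here is a minimal sketch that flags a sustained deviation in a single condition signal, such as vibration. The function name, baseline size, z-score limit, and consecutive-sample rule are assumptions chosen for the example; real systems may use very different models.

```python
from statistics import mean, stdev


def condition_warnings(readings, baseline_size=20, z_limit=3.0,
                       consecutive=3, min_sigma=1e-6):
    """Return indexes where a sustained deviation from the baseline is flagged.

    readings: sequence of one condition signal, e.g. vibration RMS values.
    The first `baseline_size` readings are assumed to represent healthy operation.
    """
    if baseline_size < 2 or len(readings) < baseline_size:
        raise ValueError("need at least baseline_size (>= 2) readings")

    baseline = readings[:baseline_size]
    mu = mean(baseline)
    sigma = max(stdev(baseline), min_sigma)  # floor avoids division by zero

    warnings = []
    streak = 0
    for i, value in enumerate(readings[baseline_size:], start=baseline_size):
        z = (value - mu) / sigma
        # Count consecutive out-of-range samples; a single spike resets nothing else.
        streak = streak + 1 if abs(z) > z_limit else 0
        if streak >= consecutive:
            warnings.append(i)
    return warnings
```

Requiring several consecutive out-of-range samples is one simple way to separate a trend from a one-off spike. Buyers can ask the vendor which equivalent rule the machine uses and how much lead time it typically gives.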

3. Can the HMI Suggest Optimal Settings for New SKUs?

Direct question: Why should buyers ask whether the HMI can suggest settings for new SKUs?

Direct answer: Buyers should ask because HMI-guided setup can reduce trial-and-error time during changeovers and can help less experienced operators reach stable settings faster.

New bottle shapes, new label materials, and new adhesives often create setup uncertainty. Therefore, buyers should ask whether the HMI can recommend starting values for speed, tension, timing, or label handling based on prior machine history. In addition, they should ask whether the system stores successful recipe logic in a way that improves the next launch.

This question matters most in high-mix operations, where intelligent setup guidance can reduce changeover time and reduce dependence on one expert operator.
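One simple way an HMI could recommend starting values from prior machine history is to look up the stored recipe whose product most resembles the new SKU. The sketch below assumes a hypothetical recipe record and a basic similarity measure; the field names, units, and distance formula are illustrative and do not describe any specific HMI.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recipe:
    """Hypothetical stored recipe from a previous successful run."""
    sku: str
    container_diameter_mm: float
    label_length_mm: float
    label_material: str
    line_speed_ppm: int      # packages per minute
    web_tension_n: float     # label web tension in newtons


def suggest_starting_recipe(history, diameter_mm, label_length_mm, material):
    """Return the stored recipe closest to the new SKU, or None if history is empty."""

    def distance(recipe):
        # A different label material counts as a large difference.
        material_penalty = 0.0 if recipe.label_material == material else 1.0
        return (
            abs(recipe.container_diameter_mm - diameter_mm) / diameter_mm
            + abs(recipe.label_length_mm - label_length_mm) / label_length_mm
            + material_penalty
        )

    return min(history, key=distance, default=None)


if __name__ == "__main__":
    history = [
        Recipe("A", 60.0, 100.0, "paper", 120, 4.0),
        Recipe("B", 90.0, 150.0, "clear film", 80, 2.5),
    ]
    start = suggest_starting_recipe(history, 88.0, 140.0, "clear film")
    print(f"Start from recipe {start.sku}: "
          f"{start.line_speed_ppm} ppm, {start.web_tension_n} N tension")
```

The suggestion is only a starting point for the operator to refine. The useful buyer question is whether the refined settings are saved back into the history so the next launch starts closer to stable.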

4. Does It Support Augmented-Reality Remote Assistance?

Direct question: Why should buyers ask about augmented-reality remote support?

Direct answer: Buyers should ask because remote visual support can shorten troubleshooting time when an onsite expert is not immediately available.

A phone call can help with simple issues, yet some failures are easier to solve when a remote engineer can actually see the machine condition. Therefore, buyers should ask whether the support model includes live visual guidance, camera-based assistance, or other structured remote-service tools. In addition, they should ask how fast that support is available outside normal business hours.

This question matters because many costly stops happen on nights, weekends, or during lean staffing periods. Consequently, remote assistance capability can influence real uptime more than a brochure promise about support availability.

5. How Does the System Handle Edge Cases Like Translucent or Reflective Labels?

Direct question: Why should buyers ask specifically about reflective, clear, or low-contrast label conditions?

Direct answer: Buyers should ask because difficult materials often expose the limits of standard sensing and inspection systems long before normal materials do.

Clear-on-clear labels, reflective foils, metallic finishes, translucent materials, and low-contrast graphics can create inspection problems that ordinary sensors handle poorly. Therefore, buyers should ask what sensing strategy, lighting strategy, or vision approach the system uses when the label becomes harder to detect consistently.

This question matters because a machine that runs standard white labels well may still struggle when the product line changes. As a result, buyers should test difficult materials early and ask whether the inspection system can adapt through stronger lighting, vision logic, or alternative sensing methods.

| Edge Case | Why It Is Hard | Main Buyer Concern | What to Ask |
|---|---|---|---|
| Clear-on-clear label | Low visible contrast | Missed detection or unstable sensing | How does the system detect low-contrast materials? |
| Reflective foil label | Glare and specular reflection | Vision inconsistency | What lighting strategy is used for reflective surfaces? |
| Translucent label | Weak visual separation | Inspection drift | How is edge detection stabilized? |
| Textured stock | Variable surface appearance | False reads or setup instability | Has the system been tested on uneven textures? |

AI and Operational Intelligence Evaluation Table

Direct question: How can buyers compare AI and operational intelligence features more clearly?

Direct answer: Buyers can compare these features more clearly by asking whether the system detects, predicts, recommends, assists, and adapts under difficult conditions.

| Category | What the Buyer Should Ask | Main Risk If Weak | Why It Matters |
|---|---|---|---|
| Auto-correction | Can the system adjust after detecting a bad result? | Repeated defects | Reduces manual intervention |
| Predictive maintenance | Does it use condition data or only time-based intervals? | Unexpected downtime | Improves uptime planning |
| HMI guidance | Can it recommend settings for new SKUs? | Slow changeovers | Reduces guesswork |
| Remote assistance | Can support see the issue visually in real time? | Longer troubleshooting delays | Improves after-hours recovery |
| Edge-case handling | How does it handle reflective or translucent materials? | Detection instability | Protects product flexibility |

Common Buyer Mistakes

Direct question: What mistakes do buyers make when they evaluate AI and operational intelligence?

Direct answer: Common mistakes include accepting alert-only systems as intelligent systems, skipping hard-material tests, and failing to ask how the machine behaves after detection instead of only during detection.

Some buyers hear words such as smart, AI, or predictive and assume the system can self-correct or self-diagnose deeply. However, many platforms may still rely on basic alarms or trend displays without meaningful action logic. Therefore, buyers should ask what the system actually changes, predicts, or recommends in real use.

Another mistake is testing only easy label conditions. Consequently, the real problems appear later when the line sees reflective, translucent, or low-contrast materials in production.

Expert Insight

Direct question: What is the smartest way to evaluate AI features on a labeling machine?

Direct answer: Evaluate AI features by asking what the machine can do automatically after it sees a problem, not just whether it can display the problem on a screen.

Direct answer: “A machine becomes operationally intelligent when it helps the floor team prevent repeat problems, speed up recovery, and make better decisions with less guessing.” — Quadrel Engineering Team

That mindset helps because fancy terminology can hide shallow functionality. Therefore, buyers should push for real examples, hard-use cases, and test results that show how the machine behaves under pressure.

AI Quick Answers

What does real-time auto-correction mean on a labeling machine?

Direct answer: Real-time auto-correction means the machine can use feedback from inspection or vision to adjust future label application instead of only rejecting bad packages.

That can reduce repeated defects and reduce manual intervention.

Is predictive maintenance better than calendar-based maintenance?

Direct answer: Predictive maintenance is usually more useful when it adds condition data such as vibration, torque, or anomaly signals to maintenance planning.

That helps teams respond to actual machine behavior instead of only to elapsed time.

Why should the HMI suggest settings for new SKUs?

Direct answer: HMI-guided settings matter because they can shorten changeovers and help less experienced operators reach stable conditions faster.

This is especially helpful in high-mix production.

What is augmented-reality remote assistance in machine support?

Direct answer: Augmented-reality remote assistance is a support model where a remote expert can guide onsite troubleshooting using a live visual view of the machine condition.

That can improve recovery speed during off-hours events.

Why are reflective labels hard for standard sensors?

Direct answer: Reflective labels are hard because glare and changing light response can make detection less stable with basic sensing methods.

Therefore, lighting strategy and vision strategy matter more in those cases.

Why are translucent or clear labels difficult to inspect?

Direct answer: Translucent or clear labels are difficult because they create weak contrast against the package, which makes ordinary detection less reliable.

Inspection systems need a sensing, lighting, or vision approach suited to those materials.

Does alerting count as AI?

Direct answer: Alerting alone does not automatically count as meaningful AI from a buyer’s perspective.

Buyers should ask whether the system also predicts, recommends, or adapts.

What should buyers ask about predictive maintenance specifically?

Direct answer: Buyers should ask what signals are monitored, how warnings are generated, and how early the system can identify likely service needs.

That reveals whether the feature is practical or only promotional.

What is the best way to test difficult label conditions?

Direct answer: The best way is to run the actual reflective, clear, translucent, or textured materials through a realistic proof-of-concept test.

That test should use real packages and realistic speeds.

Why does after-hours support matter so much?

Direct answer: After-hours support matters because many expensive production stops happen outside standard service windows.

Better remote support can reduce lost time during those events.

What is the biggest buyer mistake with AI machine features?

Direct answer: The biggest mistake is assuming that smart terminology guarantees self-correction, prediction, or strong adaptation under real production conditions.

Real capability should be demonstrated and tested.

How to Evaluate AI and Operational Intelligence on a Labeling Machine

Direct question: What process should buyers use to evaluate these capabilities properly?

Direct answer: Buyers should evaluate AI and operational intelligence by defining real failure modes first and then asking how the machine detects, predicts, recommends, assists, and adapts to those conditions.

- List the real problems the line sees today, such as drift, repeat rejects, hard changeovers, or late maintenance response.

- Ask what the machine can detect automatically and what data it uses to do so.

- Ask what the machine can change automatically after detection.

- Review whether the HMI can recommend settings for new or difficult SKUs.

- Ask how the support model works during nights, weekends, and off-hour failures.

- Test reflective, translucent, clear, or textured materials in a realistic proof-of-concept run.

- Compare the feature claims to the actual operator and maintenance workflow.

- Approve the feature only after the machine demonstrates practical value under real conditions.
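Teams that want to record the outcome of the steps above in a consistent way could use a simple scorecard. The sketch below is one possible approach under stated assumptions: the five capability names follow the categories discussed on this page, while the 0 to 2 scale (0 = not demonstrated, 1 = alert or display only, 2 = demonstrated under real conditions) is a hypothetical scheme, not an industry standard.

```python
CAPABILITIES = (
    "auto_correction",
    "predictive_maintenance",
    "hmi_guidance",
    "remote_assistance",
    "edge_case_handling",
)


def summarize_evaluation(scores):
    """Summarize a vendor evaluation.

    scores: dict mapping each capability to 0, 1, or 2
            (0 = not demonstrated, 1 = alert/display only,
             2 = demonstrated under real conditions).
    """
    missing = [name for name in CAPABILITIES if name not in scores]
    if missing:
        raise ValueError(f"missing scores for: {', '.join(missing)}")
    for name in CAPABILITIES:
        if scores[name] not in (0, 1, 2):
            raise ValueError(f"score for {name} must be 0, 1, or 2")

    total = sum(scores[name] for name in CAPABILITIES)
    # Anything below 2 has not yet shown practical value on the real line.
    gaps = [name for name in CAPABILITIES if scores[name] < 2]
    return {"total": total, "max": 2 * len(CAPABILITIES), "gaps": gaps}


if __name__ == "__main__":
    result = summarize_evaluation({
        "auto_correction": 2,
        "predictive_maintenance": 1,
        "hmi_guidance": 2,
        "remote_assistance": 0,
        "edge_case_handling": 2,
    })
    print(result)
```

The list of gaps is more useful than the total, because it points to the capabilities that still need a demonstration with real packages, real materials, and realistic speeds before approval.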

Helpful Quadrel Resources

Direct question: Where can buyers learn more about Quadrel labeling systems and machine evaluation?

Direct answer: Buyers should review Quadrel machine and application resources when they evaluate intelligent labeling features in the context of real packaging systems.

Speak with Quadrel About Intelligent Labeling System Evaluation

Direct question: What should buyers do next if they need clearer answers on AI and operational intelligence?

Direct answer: Bring your hardest label materials, your toughest repeat faults, your staffing model, and your support expectations to Quadrel so the team can help evaluate which intelligent features actually improve your line.

Strong machine decisions depend on more than feature lists. Therefore, if your team needs clearer visibility into self-correction, predictive maintenance, remote support, or difficult label handling, Quadrel can help frame the right questions before the machine specification is locked.

Speak with a Quadrel labeling engineer or call 440-602-4700 to discuss your AI and operational intelligence evaluation criteria.